Rendering the Metaverse

Over the last year, Fortnite has pushed the boundaries of large-scale, synchronous online virtual worlds through in-game concerts (Travis Scott and Ariana Grande). In hindsight, these concerts will be seen as the first mainstream precursors to the full-blown Metaverse (shout out to The Sims and Second Life for trying this before the world was ready).

But there are a lot of problems with Fortnite concerts. Some of the most notable shortcomings include:

- The experience is entirely controlled by a single entity, Epic Games, the creator of Fortnite (though to be clear, we are big fans of Epic)

- The number of people who can interact in a single space is capped at ~150, so the vast majority of audience members are participating in different versions of what would ideally be a shared experience

- The IP of the events themselves, and of any derivative outputs, is also owned by Epic

I don’t say these things to fault Epic. In fact, I applaud Epic for pioneering these efforts. Epic CEO Tim Sweeney has spoken at length about the need to build the Metaverse, and he has repeatedly emphasized that the Metaverse should not be corporate-controlled, but should instead be credibly neutral.

The mediation of these virtual worlds via centralized parties is inherently against the ethos of the open metaverse. Walled gardens create platform lock-in, intermediary costs, and most importantly, make it more difficult for assets and experiences to interoperate via composable crypto primitives. Each of these limits the creation of, engagement with, and monetization around the newer, ambitious kinds of media we expect in the metaverse.

In order to really scale the Metaverse to billions of people, we are still missing some key pieces of technical infrastructure. At a minimum, we need:

- Scalable blockchains that are optimized for DeFi and that maintain composability (e.g., Solana)

- Scalable public datastores for data-centric (as opposed to finance-centric) applications (e.g. Ceramic and Textile Threads)

- The ability to perform large trust-minimized computations over public data sets and user data streams (e.g. Fluence)

- Immense amounts of off-chain computation to render trillions of digital objects in high fidelity (e.g., Render Network)

- A permanent file store to store digital representations of assets (e.g., Arweave)

- Real time indexing + querying systems to retrieve data and files on demand

- Robust AR and VR modalities

Blockchains and other web3 systems are the substrate for the Metaverse. We have been thinking about these problems for a long time, and have written about the Web3 Stack on a number of occasions. The stack is maturing, and is becoming increasingly capable of supporting Metaverse experiences.

Today I’m excited to announce that Multicoin Capital led a $30M round in the Render Network, which addresses the fourth problem listed above: the off-chain computation needed to render trillions of digital objects. Our friends at Alameda Ventures, Sfermion, and The Solana Foundation also participated, along with angels Vinny Lingham and Bill Lee.

Rendering Trillions of Objects

Creating 3D or holographic digital media is computationally intensive. Most of the digital art created today, including most 3D NFTs, requires multiple hours of dedicated GPU time. That time goes into rendering, the process of simulating light rays across the surfaces, textures, and materials described in raw scene files to generate photorealistic images. The computational demands of rendering are significant, and they grow rapidly as standards for resolution and frame rate rise to create high-fidelity virtual worlds.
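To get a feel for how quickly those demands compound, here is a rough back-of-the-envelope sketch. It assumes render cost is simply proportional to pixels per frame times frames per second times samples per pixel. That is a simplification of how a real path tracer behaves, and the function name and the two example formats are illustrative choices, not figures from OTOY.

```python
def relative_render_cost(width, height, fps, samples_per_pixel=1):
    """Work per second of footage under a simple proportional model (assumption)."""
    return width * height * fps * samples_per_pixel


# Two illustrative output formats: 1080p at 30 fps and 4K UHD at 60 fps.
hd_30 = relative_render_cost(1920, 1080, 30)
uhd_60 = relative_render_cost(3840, 2160, 60)

# 4x the pixels and 2x the frames multiply together.
print(uhd_60 / hd_30)  # 8.0
```

Under this model, every axis of fidelity multiplies the others, which is why each step up in quality costs so much more than the last.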

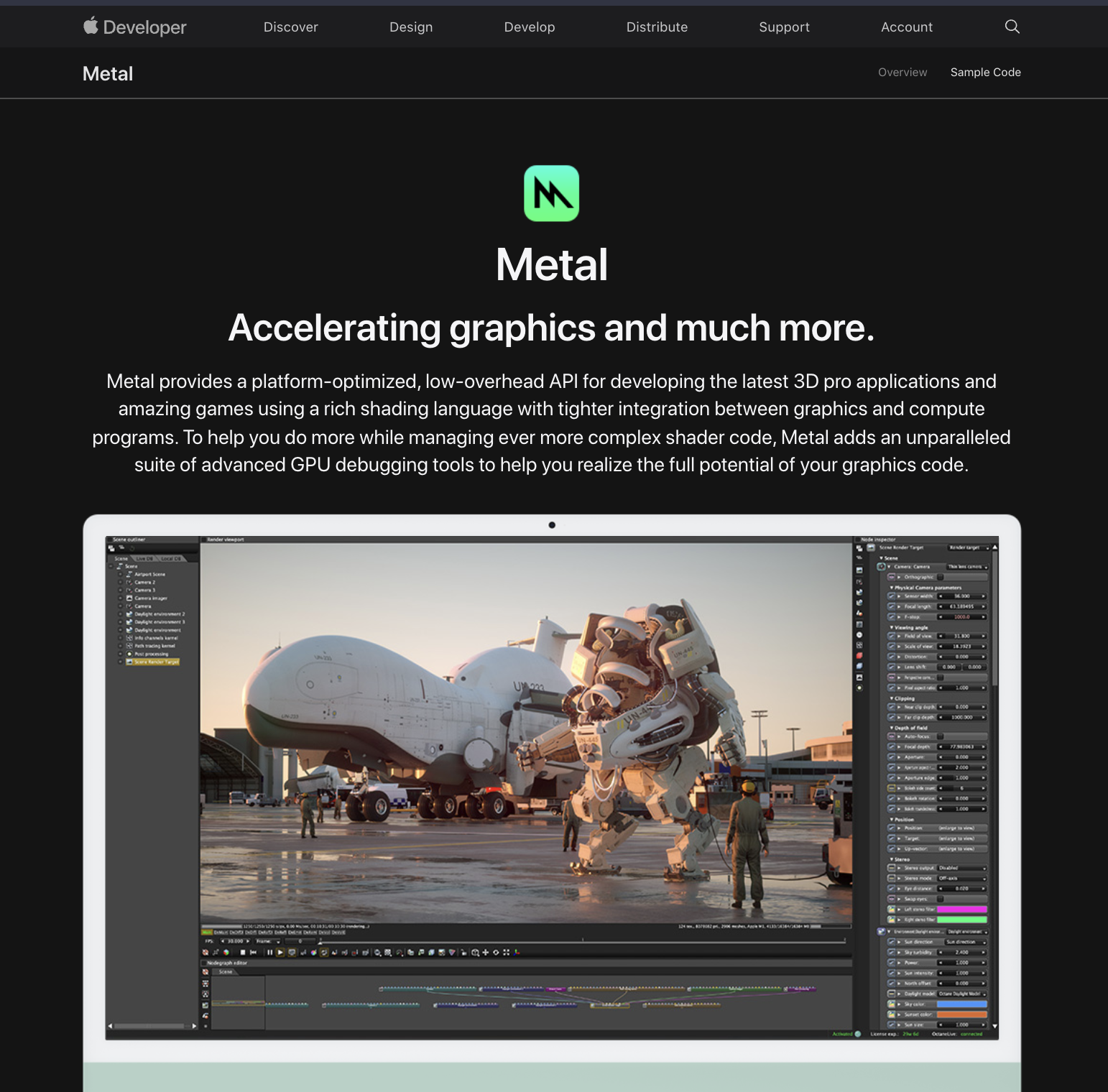

OTOY has been at the forefront of rendering software for over a decade. Their flagship app, Octane, was the first GPU-accelerated, unbiased, physically correct renderer and is widely considered to be best-in-class. All the major movie studios including Disney use Octane for VFX, along with tens of thousands of digital artists including Beeple, Pak, and FVKRNDR. The title sequence of Westworld, Lil Nas X’s music video for MONTERO, and the collections at the Frieze Viewing Room in Los Angeles were all rendered with Octane. Apple features Octane above the fold on the Metal homepage.

Jules Urbach, OTOY’s founder and CEO, developed a vision for the Metaverse over a decade ago when he founded OTOY in 2008. Over the last 13 years, OTOY has built the content creation tools and rendering engines that are enabling the creation of the trillions of objects that will make up the Metaverse.

In order to build the Metaverse, content creation tools and rendering engines alone are not enough. The Metaverse is going to require astronomical amounts of computational power: orders of magnitude more than is available in all of the world’s data centers combined.

The only way to access that much computational power is to tap into the hundreds of millions of latent GPUs that consumers have around the world. The Render Network launched in 2017 to tap into this latent supply. It allows complex GPU-based render jobs to be distributed and processed over a large peer-to-peer network. This gives creators access to compute at a scale and price that is unprecedented, along with privacy guarantees that are stronger than those of any incumbent cloud provider.

We have been investing in infinitely scalable Web3 primitives over the last few years, most notably Arweave and Livepeer. Each of these primitives focuses on a very specific functional task: Arweave provides permanent storage, and Livepeer decentralizes video transcoding.

The Render Network is optimized to run bounded (not open ended), highly-parallelizable tasks that don’t require synchronous network connections. The most common workloads that meet those criteria are digital rendering and training models for machine learning.
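To make "bounded, highly parallelizable, no synchronous connection" concrete, here is a minimal sketch. It is not the Render Network's actual scheduler: the function names are hypothetical, the "render" is a stand-in that just returns frame numbers, and a local thread pool plays the role of remote GPU nodes. The point is only that each chunk of frames is a finite job that can be handed off, worked on independently, and stitched back together afterwards.

```python
from concurrent.futures import ThreadPoolExecutor


def split_frames(first, last, chunk_size):
    """Split an inclusive frame range into independent, bounded chunks."""
    return [
        (start, min(start + chunk_size - 1, last))
        for start in range(first, last + 1, chunk_size)
    ]


def render_chunk(chunk):
    """Stand-in for a real render: returns the frame numbers it 'rendered'."""
    start, end = chunk
    return list(range(start, end + 1))


def render_job(first, last, chunk_size, workers=4):
    """Farm chunks out to workers, then reassemble the frames in order."""
    chunks = split_frames(first, last, chunk_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Executor.map yields results in the order the chunks were submitted.
        parts = list(pool.map(render_chunk, chunks))
    return [frame for part in parts for frame in part]


print(split_frames(1, 10, 4))  # [(1, 4), (5, 8), (9, 10)]
```

No chunk needs to talk to any other chunk while it runs, which is what lets this kind of work spread across machines that are only loosely connected.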

Today, the Render Network supports the major digital content creation tools, including Cinema4D, 3DS Max, Unreal Engine and Unity. In the near future, it will support rendering engines such as Arnold and Redshift in addition to Octane. The ultimate aim of the network is to support any arbitrary workload that conforms to the network’s ORBX scene data standard, and to plug in seamlessly with any number of signaling and marketplace platforms to foster the creation and maintenance of rich, immersive digital content.

Each incremental data and bandwidth product improvement, license expansion, and order matching augmentation increases the addressable market for the Render Network. The Render Network has produced physically accurate renderings of the Gene Roddenberry Star Trek archives, Beeple’s Everydays, and the Star Trek scenes shown in Apple’s October 2021 keynote (29:19 - 29:38), far faster and more cost-effectively than would be possible with incumbent cloud providers. The Render Network is live, and is powering some of the largest and highest-profile rendering jobs in the world.

The Render Network also stores a hash of all assets the system uses to build the source render graph for the job to be completed. This has novel implications, and creates another layer of provenance prior to tokenization. Such a primitive, combined with a permanent storage infrastructure such as Arweave, has the potential to drastically alter our collective understanding of genesis and ownership of digital objects.
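As a rough illustration of what hashing a job's inputs can look like, here is a small sketch. The choice of SHA-256, the manifest layout, and the function and asset names are all assumptions made for the example. The post does not say which hash function or format the Render Network actually uses.

```python
import hashlib
from typing import Mapping


def asset_digest(data: bytes) -> str:
    """Hex SHA-256 digest of a single asset's bytes."""
    return hashlib.sha256(data).hexdigest()


def manifest_digest(assets: Mapping[str, bytes]) -> str:
    """One digest over all assets, independent of the order they were added."""
    h = hashlib.sha256()
    for name in sorted(assets):
        h.update(name.encode("utf-8"))
        h.update(b"\0")  # separator between name and digest
        h.update(asset_digest(assets[name]).encode("ascii"))
        h.update(b"\n")  # separator between entries
    return h.hexdigest()


# Hypothetical scene inputs, stood in for by short byte strings.
scene = {"mesh.obj": b"vertices...", "wood.png": b"pixels..."}
print(manifest_digest(scene))
```

Changing a single byte of any input changes the final digest, so a stored digest acts as a compact fingerprint of exactly which source assets went into a render.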

A Billion Dollar Arbitrage

It is widely known that renting GPUs from any of the major cloud providers (AWS, GCP, Azure, etc.) is expensive, and that it’s frequently impossible to rent them in large quantities.

Capitalism dictates that the cloud providers should respond to market demand. And yet, they don’t. What’s going on?

The cloud providers are not at fault. Instead, the issue is hardware: Nvidia will not allow their flagship consumer graphics cards (e.g. 2080 or 3080) to be deployed with cloud providers. Instead, they sell their workstation cards, under the Tesla or Quadro brand, which are up to 10x as expensive, but only provide 20-25% more computational power.

The Render Network therefore capitalizes on a multi-billion dollar arbitrage. By unlocking hundreds of millions of otherwise latent consumer-grade GPUs, the Render Network can dramatically reduce the cost of GPU-based computation, increase the total number of renderings, and decrease the time required to complete rendering jobs.
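The size of the gap is easy to work out from the figures quoted above. The sketch below takes the stated upper bound on price (10x) and the stated performance range (20-25% more) at face value. It ignores power, hosting, and utilization, so treat it as a worst-case ratio rather than a market estimate. The function name is illustrative.

```python
def cost_per_unit_compute(price_multiple, performance_multiple):
    """Relative price paid per unit of compute, versus a consumer card at 1.0."""
    return price_multiple / performance_multiple


# Workstation card: up to 10x the price for 20-25% more compute.
best_case = cost_per_unit_compute(10, 1.25)
worst_case = cost_per_unit_compute(10, 1.20)

print(best_case)              # 8.0
print(round(worst_case, 2))   # 8.33
```

In other words, at the top of the quoted price range, each unit of workstation compute costs roughly eight times what the same unit costs on a consumer card.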

Looking Ahead

The Render Network launched in 2017, but very few crypto investors have even heard of it. I hadn’t until just a few months ago. The network has been operating in something of a closed beta since then, while the Octane and Render Network communities have been tuning the system to ensure an optimal experience for creators.

At Solana’s Breakpoint conference in early November, the Render team announced that they are moving the RNDR token and major parts of the Render Network to the Solana blockchain. This migration will occur over the course of 2022, and will unlock a ton of amazing opportunities as third-party developers build higher-level applications using the Render Network as a composable crypto primitive.

Disclosure: Unless otherwise indicated, the views expressed in this post are solely those of the author(s) in their individual capacity and are not the views of Multicoin Capital Management, LLC or its affiliates (together with its affiliates, “Multicoin”). Certain information contained herein may have been obtained from third-party sources, including from portfolio companies of funds managed by Multicoin. Multicoin believes that the information provided is reliable and makes no representations about the enduring accuracy of the information or its appropriateness for a given situation. This post may contain links to third-party websites (“External Websites”). The existence of any such link does not constitute an endorsement of such websites, the content of the websites, or the operators of the websites. These links are provided solely as a convenience to you and not as an endorsement by us of the content on such External Websites. The content of such External Websites is developed and provided by others and Multicoin takes no responsibility for any content therein. Charts and graphs provided within are for informational purposes solely and should not be relied upon when making any investment decision. Any projections, estimates, forecasts, targets, prospects, and/or opinions expressed in this blog are subject to change without notice and may differ or be contrary to opinions expressed by others.

The content is provided for informational purposes only, and should not be relied upon as the basis for an investment decision, and is not, and should not be assumed to be, complete. The contents herein are not to be construed as legal, business, or tax advice. You should consult your own advisors for those matters. References to any securities or digital assets are for illustrative purposes only, and do not constitute an investment recommendation or offer to provide investment advisory services. Any investments or portfolio companies mentioned, referred to, or described are not representative of all investments in vehicles managed by Multicoin, and there can be no assurance that the investments will be profitable or that other investments made in the future will have similar characteristics or results. A list of investments made by venture funds managed by Multicoin is available here: https://multicoin.capital/portfolio/. Excluded from this list are investments that have not yet been announced due to coordination with the development team(s) or issuer(s) on the timing and nature of public disclosure. Separately, for strategic reasons, Multicoin Capital’s hedge fund does not disclose positions in publicly traded digital assets.

This blog does not constitute investment advice or an offer to sell or a solicitation of an offer to purchase any limited partner interests in any investment vehicle managed by Multicoin. An offer or solicitation of an investment in any Multicoin investment vehicle will only be made pursuant to an offering memorandum, limited partnership agreement and subscription documents, and only the information in such documents should be relied upon when making a decision to invest.

Past performance does not guarantee future results. There can be no guarantee that any Multicoin investment vehicle’s investment objectives will be achieved, and the investment results may vary substantially from year to year or even from month to month. As a result, an investor could lose all or a substantial amount of its investment. Investments or products referenced in this blog may not be suitable for you or any other party. Valuations provided are based upon detailed assumptions at the time they are included in the post and such assumptions may no longer be relevant after the date of the post. Our target price or valuation and any base or bull-case scenarios which are relied upon to arrive at that target price or valuation may not be achieved.

Multicoin has established, maintains and enforces written policies and procedures reasonably designed to identify and effectively manage conflicts of interest related to its investment activities. For more important disclosures, please see the Disclosures and Terms of Use available at https://multicoin.capital/disclosures and https://multicoin.capital/terms.